The technological development of self-driving vehicles entails ethical issues as well as judicial issues concerning government policy, traffic regulations, and technical standards.

Judicial issues

Crash responsibility and liability are important judicial issues in crashes involving self-driving vehicles. Currently, drivers of commercially available, partly self-driving vehicles are obliged to keep their hands on the steering wheel, and they remain responsible for safety at all times. In the event of a crash, therefore, the driver will usually be held liable rather than the developer or producer of the system. When higher-level self-driving vehicles become commercially available, and most or all tasks are carried out by the vehicle, it will technically be possible to let go of the steering wheel for quite some time. Before drivers can be offered this possibility as a properly embedded option, legislation will first have to be modified. The Netherlands Road Authority is currently examining how legislation concerning self-driving vehicles needs to be adapted [33].

Ethical issues

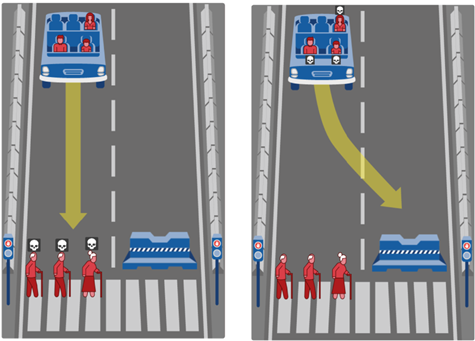

The introduction of self-driving vehicles also raises important ethical issues. Ideally, a self-driving vehicle should always make the appropriate decision, even in emergencies. It remains to be seen, however, whether this is feasible and, if not, on what grounds choices will have to be made [34]. Should the vehicle protect its occupants or rather other road users? Does the decision depend on the number of road users at risk, or on who is at risk? An extreme example from the Moral Machine Experiment by Awad et al. [35] illustrates this (see Figure 1).

Figure 1. Ethically speaking, what is the correct decision? An extreme example: what if a completely self-driving vehicle with three occupants loses control and is no longer able to brake? The vehicle, for which the traffic light is green, approaches a pedestrian crossing. Three older pedestrians are crossing the road in spite of the red pedestrian light. If the vehicle keeps to its lane, it will hit the three older pedestrians. The vehicle may swerve into the other lane but, in that case, it will hit a concrete road block, endangering the three occupants. From: Awad et al., 2018 [35]; see https://www.nature.com/articles/s41586-018-0637-6/figures/1.

The example is, of course, extreme, but in less risky situations decisions will also need to be made about how to distribute risks across the parties involved. Car manufacturers and policy makers are struggling with such dilemmas, which are hard to resolve with current ethical principles [35]. There is a strong need to develop moral algorithms that can cope with such dilemmas in accordance with acceptable ethical standards [34].