The references used in this fact sheet are listed below; all sources can be consulted or retrieved. More literature on road safety can be found via Publications.

[1]. NHTSA (2021). Automated Vehicles for Safety. National Highway Traffic Safety Administration, NHTSA, Washington, D.C. Accessed on 10-08-2021 at www.nhtsa.gov/technology-innovation/automated-vehicles-safety.

[2]. ERTRAC (2019). Connected automated driving roadmap. European Road Transport Research Advisory Council, ERTRAC, Brussels.

[3]. Tabone, W., Winter, J. de, Ackermann, C., Bärgman, J., et al. (2021). Vulnerable road users and the coming wave of automated vehicles: Expert perspectives. In: Transportation Research Interdisciplinary Perspectives, vol. 9, p. 100293.

[4]. SAE (2021). Surface vehicle recommended practice: Taxonomy and definitions for terms related to driving automation systems for on-road motor vehicles. SAE J 3016-2021. SAE International.

[5]. Tesla (2021). Autopilot. Accessed on 01-10-2021 at www.tesla.com/nl_NL/autopilot.

[6]. NHTSA (2008). National motor vehicle crash causation survey. Report DOT HS 811 059. National Highway Traffic Safety Administration, NHTSA, Washington, D.C.

[7]. Milakis, D., Arem, B. van & Wee, B. van (2017). Policy and society related implications of automated driving: A review of literature and directions for future research. In: Journal of Intelligent Transportation Systems, vol. 21, nr. 4, p. 324-348.

[8]. Hagenzieker, M.P. (2015). 'Dat paaltje had ook een kind kunnen zijn'. Over verkeersveiligheid en gedrag van mensen in het verkeer. Inaugural lecture, 21 October 2015, on accepting the position of Professor of Road Safety at the Faculty of Civil Engineering and Geosciences, Delft University of Technology. TU Delft, Delft.

[9]. SWOV & RAI (2018). Veilig onderweg met de auto. SWOV, Den Haag.

[10]. Milakis, D., Snelder, M., Arem, B. van, Wee, B. van, et al. (2017). Development and transport implications of automated vehicles in the Netherlands: scenarios for 2030 and 2050. In: European Journal of Transport and Infrastructure Research, vol. 17, nr. 1.

[11]. Bazilinskyy, P., Kyriakidis, M., Dodou, D. & Winter, J. de (2019). When will most cars be able to drive fully automatically? Projections of 18,970 survey respondents. In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 64, p. 184-195.

[12]. Overheid.nl (2005). Besluit ontheffingverlening exceptioneel vervoer. Accessed on 17-03-2022 at wetten.overheid.nl/BWBR0018680/2015-07-01.

[13]. Overheid.nl (2019). Regeling vergunningverlening experimenten zelfrijdende auto. Accessed on 17-03-2022 at wetten.overheid.nl/BWBR0042343/2019-07-01.

[14]. Hoekstra, A.T.G. & Mons, C. (2020). Advisering over praktijkproeven met zelfrijdende voertuigen. Herziening risicomatrix en lessen uit eerdere proeven. R-2020-14. SWOV, Den Haag.

[15]. Jansen, R.J., Mons, C., Hoekstra, A.T.G., Louwerse, W.J.R., et al. (2019). Advies praktijkproef. HagaShuttle. R-2019-10. SWOV, Den Haag.

[16]. Rijksoverheid (2016). Declaration of Amsterdam ‘Cooperation in the field of connected and automated driving’.

[17]. OvV (2019). Veilig toelaten op de weg. Lessen naar aanleiding van het ongeval met de Stint. Onderzoeksraad voor Veiligheid, OvV, Den Haag.

[18]. Victor, T.W., Tivesten, E., Gustavsson, P., Johansson, J., et al. (2018). Automation expectation mismatch: Incorrect prediction despite eyes on threat and hands on wheel. In: Human Factors, vol. 60, nr. 8, p. 1095-1116.

[19]. Carsten, O. & Martens, M.H. (2019). How can humans understand their automated cars? HMI principles, problems and solutions. In: Cognition, Technology & Work, vol. 21, nr. 1, p. 3-20.

[20]. Dixon, L. (2020). Autonowashing: The greenwashing of vehicle automation. In: Transportation Research Interdisciplinary Perspectives, vol. 5, p. 100113.

[21]. Aramrattana, M., Habibovic, A. & Englund, C. (2021). Safety and experience of other drivers while interacting with automated vehicle platoons. In: Transportation Research Interdisciplinary Perspectives, vol. 10, p. 100381.

[22]. IenM (2015). Brochure European Truck Platooning Challenge. Ministry of Infrastructure and the Environment, The Hague.

[23]. Ensemble (2021). Platooning together. Accessed on 19-11-2021 at https://platooningensemble.eu/.

[24]. Axelsson, J. (2017). Safety in vehicle platooning. A systematic literature review. In: IEEE Transactions on Intelligent Transportation Systems, vol. 18, nr. 5.

[25]. Biondi, F., Alvarez, I. & Jeong, K.-A. (2019). Human–vehicle cooperation in automated driving: A multidisciplinary review and appraisal. In: International Journal of Human–Computer Interaction, vol. 35, nr. 11, p. 932-946.

[26]. Litman, T. (2022). Autonomous vehicle implementation predictions: Implications for transport planning. Victoria Transport Policy Institute, VTPI.

[27]. Paddeu, D., Parkhurst, G. & Shergold, I. (2020). Passenger comfort and trust on first-time use of a shared autonomous shuttle vehicle. In: Transportation Research Part C: Emerging Technologies, vol. 115, p. 102604.

[28]. Hagenzieker, M., Boersma, R., Velasco, P.N., Ozturker, M., et al. (2020). Automated buses in Europe: An inventory of pilots. TU Delft, Delft.

[29]. Oikonomou, M.G., Orfanou, F.P., Vlahogianni, E.I. & Yannis, G. (2020). Impacts of autonomous shuttle services on traffic, safety and environment for future mobility scenarios. In: IEEE 23rd International Conference on Intelligent Transportation Systems. p. 1-6.

[30]. Lengyel, H., Tettamanti, T. & Szalay, Z. (2020). Conflicts of automated driving with conventional traffic infrastructure. In: IEEE Access, vol. 8.

[31]. Hetzer, D., Muehleisen, M., Kousaridas, A., Barmpounakis, S., et al. (2021). 5G connected and automated driving: use cases, technologies and trials in cross-border environments. In: EURASIP Journal on Wireless Communications and Networking, vol. 2021, nr. 1, p. 97.

[32]. Czarnecki, K. (2019). Software engineering for automated vehicles: Addressing the needs of cars that run on software and data. In: 2019 IEEE/ACM 41st International Conference on Software Engineering: Companion Proceedings (ICSE-Companion). IEEE. p. 6-8.

[33]. Rizoomes (2020). De veiligheid van de zelfrijdende auto. Accessed on 17-08-2021 at www.rizoomes.nl/veiligheidskunde/de-veiligheid-van-zelfrijdende-autos/.

[34]. Barabás, I., Todoruţ, A., Cordoş, N. & Molea, A. (2017). Current challenges in autonomous driving. In: IOP Conference Series: Materials Science and Engineering, vol. 252, nr. 251, p. 012096. IOP Publishing.

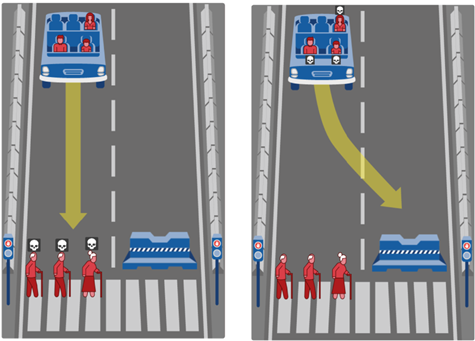

[35]. Awad, E., Dsouza, S., Kim, R., Schulz, J., et al. (2018). The Moral Machine experiment. In: Nature, vol. 563, nr. 7729, p. 59-64.

[36]. Sarter, N.B. & Woods, D.D. (1995). How in the world did we ever get into that mode? Mode error and awareness in supervisory control. In: Human Factors, vol. 37, nr. 1, p. 5-19.

[37]. Lee, J.D. & See, K.A. (2004). Trust in automation: designing for appropriate reliance. In: Human Factors, vol. 46, nr. 1, p. 50-80.

[38]. Parasuraman, R. & Riley, V. (1997). Humans and automation: Use, misuse, disuse, abuse. In: Human Factors, vol. 39, nr. 2, p. 230-253.

[39]. Matthews, G., Wohleber, R., Lin, J. & Panganiban, A.R. (2019). Fatigue, automation, and autonomy. Challenges for operator attention, effort, and trust. In: Mouloua, M., Hancock, P.A. & Ferraro, J. (eds.), Human performance in automated and autonomous systems. Current theory and methods. CRC Press, Boca Raton.

[40]. Stapel, J., Mullakkal-Babu, F.A. & Happee, R. (2019). Automated driving reduces perceived workload, but monitoring causes higher cognitive load than manual driving. In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 60, p. 590-605.

[41]. Navarro, J. (2019). A state of science on highly automated driving. In: Theoretical Issues in Ergonomics Science, vol. 20, nr. 3, p. 366-396.

[42]. Howard, D. & Dai, D. (2014). Public perceptions of self-driving cars: The case of Berkeley, California. In: Transportation Research Board 93rd annual meeting, vol. 14, nr. 4502, p. 1-16.

[43]. Talebian, A. & Mishra, S. (2018). Predicting the adoption of connected autonomous vehicles. A new approach based on the theory of diffusion of innovations. In: Transportation Research Part C: Emerging Technologies, vol. 95, p. 363-380.

[44]. Leicht, T., Chtourou, A. & Ben Youssef, K. (2018). Consumer innovativeness and intentioned autonomous car adoption. In: The Journal of High Technology Management Research, vol. 29, nr. 1, p. 1-11.

[45]. Vissers, L., Kint, S. van der, Schagen, I. van & Hagenzieker, M. (2016). Safe interaction between cyclists, pedestrians and automated vehicles; What do we know and what do we need to know? R-2016-16. SWOV, The Hague.

[46]. Nuñez Velasco, P., Farah, H., Arem, B. van & Hagenzieker, M. (2017). Interactions between vulnerable road users and automated vehicles: A synthesis of literature and framework for future research. In: Proceedings of the Road Safety and Simulation International Conference. p. 16-19.

[47]. Vlakveld, W., Kint, S. van der & Hagenzieker, M.P. (2020). Cyclists’ intentions to yield for automated cars at intersections when they have right of way: Results of an experiment using high-quality video animations. In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 71, p. 288-307.

[48]. Vlakveld, W.P. & Kint, S. van der (2018). Hoe reageren fietsers op zelfrijdende auto’s? Gedragsintenties bij ontmoetingen op kruispunten. R-2018-21. SWOV, Den Haag.

[49]. Chang, C.M., Toda, K., Sakamoto, D. & Igarashi, T. (2017). Eyes on a car: an interface design for communication between an autonomous car and a pedestrian. In: Proceedings of the 9th international conference on automotive user interfaces and interactive vehicular applications. p. 65-73.

[50]. Gouy, M., Wiedemann, K., Stevens, A., Brunett, G., et al. (2014). Driving next to automated vehicle platoons: How do short time headways influence non-platoon drivers’ longitudinal control? In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 27, p. 264-273.

[51]. Schoettle, B. & Sivak, M. (2015). A preliminary analysis of real-world crashes involving self-driving vehicles. UMTRI-2015-34. University of Michigan Transportation Research Institute.

[52]. Naujoks, F., Wiedemann, K., Schömig, N., Hergeth, S., et al. (2019). Towards guidelines and verification methods for automated vehicle HMIs. In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 60, p. 121-136.

[53]. Dey, D., Habibovic, A., Löcken, A., Wintersberger, P., et al. (2020). Taming the eHMI jungle: A classification taxonomy to guide, compare, and assess the design principles of automated vehicles' external human-machine interfaces. In: Transportation Research Interdisciplinary Perspectives, vol. 7, p. 100174.

[54]. MEDIATOR (2021). MEdiating between Driver and Intelligent Automated Transport Systems on Our Roads. Accessed on 16-08-2021 at mediatorproject.eu/.

[55]. Petermeijer, S.M., Tinga, A.M., Reus, A. de, Jansen, R.J., et al. (2021). What makes a good team? – Towards the assessment of driver-vehicle cooperation. AutomotiveUI.

[56]. Regan, M., Prabhakharan, P., Wallace, P., Cunningham, M.L., et al. (2020). Education and training for drivers of assisted and automated vehicles. AP-R616-20. Austroads.

[57]. Vlakveld, W.P. & Wesseling, S. (2018). ADAS in het rijexamen. Vragenlijstonderzoek onder rijschoolhouders en rijexaminatoren naar moderne rijtaakondersteunende systemen in de rijopleiding en het rijexamen voor rijbewijs B. R-2018-20. SWOV, Den Haag.